
PoldiLoadRuns v1

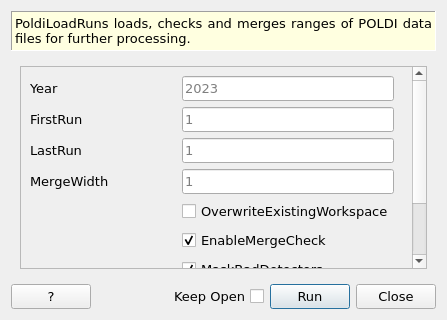

PoldiLoadRuns dialog.

Summary

PoldiLoadRuns loads, checks and merges ranges of POLDI data files for further processing.

Properties

| Name | Direction | Type | Default | Description |
|---|---|---|---|---|
| Year | Input | number | 2023 | The year in which all runs were recorded. |
| FirstRun | Input | number | 1 | Run number of the first run. If only this number is supplied, only this run is processed. |
| LastRun | Input | number | 1 | Run number of the last run. |
| MergeWidth | Input | number | 1 | Number of consecutive runs to merge into one workspace. |
| OverwriteExistingWorkspace | Input | boolean | False | If a WorkspaceGroup with the given name already exists, overwrite it. |
| EnableMergeCheck | Input | boolean | True | Enable all the checks in PoldiMerge. Do not deactivate without very good reason. |
| MaskBadDetectors | Input | boolean | True | Automatically disable detectors with unusually small or large values, in addition to those masked in the instrument definition. |
| BadDetectorThreshold | Input | number | 3 | Detectors are masked based on how much their intensity (integrated over time) deviates from the median calculated from all detectors. This parameter indicates how many times larger than the median the intensity needs to be for a detector to be masked. |
| OutputWorkspace | Output | | Mandatory | Name of the output group workspace that contains all data workspaces. |

Description

This algorithm makes it easier to load POLDI data. Besides importing the raw data (LoadSINQ v1), it performs the steps that would otherwise have to be carried out manually: loading the instrument (LoadInstrument v1) and truncating the data (PoldiTruncateData v1).

To make the algorithm more useful, it can load data from multiple runs when a range is specified. In many cases, data files need to be merged in a systematic manner, which this algorithm also covers: a parameter (MergeWidth) specifies how the files of the specified range are merged. Each resulting data workspace is named following the scheme group_data_run, where group is the name specified in OutputWorkspace and run is the run number. All data workspaces are placed into a WorkspaceGroup with the name given in OutputWorkspace.
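The naming scheme can be spelled out in a few lines of plain Python. This is only an illustrative sketch: `data_workspace_name` and `names_for_range` are hypothetical helpers, not part of Mantid, and the sketch assumes that the run range includes both FirstRun and LastRun and that no merging takes place (MergeWidth = 1), as in the first usage examples.

```python
def data_workspace_name(group, run):
    """Build a data workspace name following the group_data_run scheme."""
    return "{}_data_{}".format(group, run)


def names_for_range(group, first_run, last_run):
    """Names for every run from first_run to last_run, both inclusive (no merging)."""
    return [data_workspace_name(group, run) for run in range(first_run, last_run + 1)]


print(names_for_range("calibration", 6903, 6904))
# ['calibration_data_6903', 'calibration_data_6904']
```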

By default, detectors are excluded if their integrated intensity exceeds a certain threshold relative to the median of the integrated intensities of all detectors. The threshold can be adjusted using the BadDetectorThreshold parameter. Detectors that are masked in the instrument definition are always masked.
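The masking criterion described for BadDetectorThreshold can be illustrated with a short pure-Python sketch. It assumes the intensities have already been integrated over time, and it only models the upper threshold (intensity larger than BadDetectorThreshold times the median); `find_bad_detectors` is a hypothetical name, and the actual algorithm may also flag unusually small values and always keeps the masks from the instrument definition, neither of which is modelled here.

```python
from statistics import median


def find_bad_detectors(intensities, threshold=3.0):
    """Return indices of detectors whose integrated intensity is more than
    `threshold` times the median over all detectors (hypothetical sketch,
    upper threshold only)."""
    reference = median(intensities)
    return [i for i, value in enumerate(intensities) if value > threshold * reference]


# Integrated (time-summed) counts per detector; detector 3 is a hot spot.
counts = [100, 95, 110, 480, 102]
print(find_bad_detectors(counts))  # [3]
```

Using the median rather than the mean as the reference keeps a single very bright detector from raising the reference value and hiding itself.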

The data loaded in this way can be used directly for further processing with PoldiAutoCorrelation v5.

Usage

Note

To run these usage examples, first download the usage data and add its location to your data search directories. In Mantid this is done using Manage User Directories.

To load only one POLDI data file (in this case a run from a calibration measurement with a silicon standard), it is enough to specify the year and the run number. The resulting workspace is nevertheless placed in a WorkspaceGroup. When no OutputWorkspace is given, Mantid's Python simple API names the output after the variable it is assigned to, here calibration.

```python
from mantid.simpleapi import *

calibration = PoldiLoadRuns(2013, 6903)

# calibration is a WorkspaceGroup, so getNames() lists the workspaces it contains.
workspaceNames = calibration.getNames()

print("Number of data files loaded: {}".format(len(workspaceNames)))
print("Name of data workspace: {}".format(workspaceNames[0]))
```

Since only one run number was supplied, only one workspace is loaded. The name corresponds to the scheme described above:

```
Number of data files loaded: 1
Name of data workspace: calibration_data_6903
```

In fact, the silicon calibration measurement consists of more than one run, so it is better to load all of its files at once by providing the start and end of the range to be loaded:

```python
from mantid.simpleapi import *

# Load two calibration data files (6903 and 6904)
calibration = PoldiLoadRuns(2013, 6903, 6904)

workspaceNames = calibration.getNames()

print("Number of data files loaded: {}".format(len(workspaceNames)))
print("Names of data workspaces: {}".format(workspaceNames))
```

Now all files from the specified range are in the calibration WorkspaceGroup:

```
Number of data files loaded: 2
Names of data workspaces: ['calibration_data_6903', 'calibration_data_6904']
```

However, these data files should not be processed separately: they belong to the same measurement and should be merged. Instead of calling PoldiMerge v1 directly, you can tell the algorithm to merge the files right after loading by specifying how many of them should be merged together (MergeWidth). Setting this parameter to 2 means that the whole range is iterated and the files are merged in pairs:

```python
from mantid.simpleapi import *

# Load two calibration data files (6903 and 6904) and merge them (MergeWidth = 2)
calibration = PoldiLoadRuns(2013, 6903, 6904, 2)

workspaceNames = calibration.getNames()

print("Number of data files loaded: {}".format(len(workspaceNames)))
print("Names of data workspaces: {}".format(workspaceNames))
```

The merged workspace receives the name of the last run in the merged range:

```
Number of data files loaded: 1
Names of data workspaces: ['calibration_data_6904']
```

If the number of runs in the specified range is not a multiple of the merge parameter (for example, specifying 3 in the code above), the algorithm merges as many complete blocks of runs as it can and leaves out the remaining runs. In the example above, this would result in no data files being loaded at all.
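The grouping rule can be sketched in plain Python to make the two cases above concrete. This is an illustration of the documented behaviour, not the Mantid implementation: `merge_groups` and `merged_names` are hypothetical helpers, the run range is assumed to be inclusive, and runs that do not fill a complete block of MergeWidth runs are assumed to be dropped from the end of the range.

```python
def merge_groups(first_run, last_run, merge_width=1):
    """Split the inclusive run range into consecutive blocks of merge_width runs.

    Runs that do not fill a complete block are left out (hypothetical sketch).
    """
    runs = list(range(first_run, last_run + 1))
    complete = len(runs) - len(runs) % merge_width  # runs covered by full blocks
    return [runs[i:i + merge_width] for i in range(0, complete, merge_width)]


def merged_names(group, first_run, last_run, merge_width=1):
    """Each merged block is named after the last run it contains."""
    return ["{}_data_{}".format(group, block[-1])
            for block in merge_groups(first_run, last_run, merge_width)]


print(merged_names("calibration", 6903, 6904, 2))  # ['calibration_data_6904']
print(merged_names("calibration", 6903, 6904, 3))  # []
```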

A common situation is that one sample consists of several ranges of runs that cannot be expressed as a single range. It is nevertheless possible to collect them all in one WorkspaceGroup. In fact, this is the default behavior of the algorithm if the supplied OutputWorkspace already exists:

```python
from mantid.simpleapi import *

# Load calibration data
calibration = PoldiLoadRuns(2013, 6903)

# Add another file to the existing calibration WorkspaceGroup
calibration = PoldiLoadRuns(2013, 6904)

workspaceNames = calibration.getNames()

print("Number of data files loaded: {}".format(len(workspaceNames)))
print("Names of data workspaces: {}".format(workspaceNames))
```

The result is the same as in the range example above, with two files in the WorkspaceGroup:

```
Number of data files loaded: 2
Names of data workspaces: ['calibration_data_6903', 'calibration_data_6904']
```

Alternatively, an existing WorkspaceGroup can be overwritten, for example if a mistake was made in a previous data loading step. The OverwriteExistingWorkspace parameter needs to be set explicitly:

```python
from mantid.simpleapi import *

# Load calibration data, but forget to merge
calibration = PoldiLoadRuns(2013, 6903, 6904)

# Load the data again, this time with correct merging, replacing the group contents
calibration = PoldiLoadRuns(2013, 6903, 6904, 2, OverwriteExistingWorkspace=True)

workspaceNames = calibration.getNames()

print("Number of data files loaded: {}".format(len(workspaceNames)))
print("Names of data workspaces: {}".format(workspaceNames))
```

The data loaded in the first call to the algorithm have been overwritten with the merged data set:

```
Number of data files loaded: 1
Names of data workspaces: ['calibration_data_6904']
```
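The difference between the default (append) behaviour and OverwriteExistingWorkspace can be modelled with an ordinary dictionary standing in for Mantid's workspace storage. This is a hypothetical sketch: `add_to_group` is not a Mantid function, and the assumption that reloading a run replaces the workspace of the same name (so it is not listed twice) is made for illustration only.

```python
def add_to_group(store, group, new_names, overwrite=False):
    """Collect new_names in store[group], a dict-based stand-in for a WorkspaceGroup.

    By default, new workspaces are appended to an existing group; with
    overwrite=True the previous contents of the group are discarded first.
    """
    if overwrite or group not in store:
        store[group] = []
    for name in new_names:
        # Assumption: a reloaded run replaces the workspace of the same name.
        if name not in store[group]:
            store[group].append(name)
    return store[group]


store = {}
add_to_group(store, "calibration", ["calibration_data_6903"])
add_to_group(store, "calibration", ["calibration_data_6904"])
print(store["calibration"])  # ['calibration_data_6903', 'calibration_data_6904']

add_to_group(store, "calibration", ["calibration_data_6904"], overwrite=True)
print(store["calibration"])  # ['calibration_data_6904']
```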

Categories: AlgorithmIndex | SINQ\Poldi

Source

Python: PoldiLoadRuns.py